The accessibility and capability of Artificial Intelligence (AI) are evolving faster than at any moment in history, triggering a wave of reinvention and innovation across virtually every industry. Digital transformation doesn’t happen overnight, so the opportunity of moving early is significant, as is the risk of waiting.

Examples include AI solutions that:

Identify and minimise the impact of cyber attacks.

Optimise the management of critical infrastructure to reduce carbon emissions.

Diagnose illnesses through analysis of medical imaging and patient data.

Detect fraud through analysis of trends in behavioural markers.

Provide financial advice through analysis of market performance and investor profiles.

Trade financial and energy products through rules-based trading and market arbitrage.

Create content and media through generative AI.

Drive vehicles through real-time position and environment sensing and navigation.

From healthcare to telecommunications, critical infrastructure, banking and government services, PwC has identified over 50 use cases where AI is already being used by organisations to gain competitive advantage and differentiate their products and services.

For the organisations that apply it wisely, AI has the potential to save time and money, improve products and services and disrupt industries. But moving quickly on AI research and development only pays dividends if organisations are also ready and able to navigate the risks of bleeding-edge technology effectively. By investing in AI risk management and governance early, organisations can avoid needing to put the brakes on AI solutions later due to a lack of trust.

AI is more than hype - it is real, here to stay, and its potential is developing at an exponential rate. But like any decision model or automation, it needs to be sufficiently accurate, reliable, resilient and secure before it can be trusted.

Boards and Executives are operating behind glass walls, and the principles of transparency and consent are essential for ensuring that your organisation is meeting community standards - especially in the absence of regulation or widely adopted best practice.

Recent history is littered with case studies where organisations did not meet consumers’ expectations of AI, including gender discrimination in recruitment, vehicle crashes and fatalities resulting from autonomous driving, invasion of consumer privacy through the use of AI-enabled surveillance, and popular AI chatbots providing factually incorrect information and being ‘tricked’ into generating false news.

Implementing AI and advanced analytics solutions without the right safeguards in place can be costly. For example:

Non-compliance with data protection and privacy regulation (e.g. unlawful secondary uses of personal data).

Over-reliance on AI for automation and decision-making (e.g. applying models for use cases that demand high precision, with inadequate oversight and review).

Reputational damage by failing to meet community standards and customer expectations around the use of AI in products and services, and the use of personal data with AI.

Unlawful discrimination or harmful biases caused by unbalanced training data and/or incomplete review of model outputs.

Insufficient planning for operational resilience for business critical applications of AI.

Inaccurate insights or misinformed decisions due to quality issues with training data, model design, training approach or improper usage of the model.

Ambiguous intellectual property rights due to the use of generative AI that was trained on proprietary (third party owned) data.

So, how do you avoid the pitfalls and deliver AI solutions that you, your employees, customers, investors and regulators can trust?

Here are our top 3 tips for Boards and Executives.

Unify the organisation’s response to the opportunities and risks of AI with a clear position statement on AI automation that reflects the organisation’s appetite for risk, the organisation’s values and the environment that you’re operating in. Define a set of AI usage principles that help people understand and align with your values and risk appetite.

Empower the organisation to learn, innovate and explore safely with trusted AI environments and tools. This is essential to focus on early. If teams feel constrained by bureaucracy and over-governance, it could lead to what is arguably an even greater risk - ‘Shadow AI’ or ‘Shadow Automation’. Similar to ‘Shadow IT’, this is where AI automation is applied in siloed and unstrategic ways within pockets of an organisation to fulfil immediate needs, without central visibility or coordination. And that brings us to our second tip (below).

Questions to ask:

Have we defined our risk appetite for the use of emerging technology, AI and advanced analytics? Is it within our risk appetite not to use AI if our competitors are?

For which business functions, use cases and data assets are we comfortable using AI models, and with what degree of governance and oversight?

Conversational AI has renewed the topic of artificial intelligence and automation for many Boards and Executives; however, the technology is not necessarily new and the opportunities are far broader.

In fact, most organisations are already using AI models and advanced analytics to make decisions, automate tasks and serve customers - sometimes in areas they are unaware of. For example, an organisation might have advanced analytics being developed outside of its formal governance process, a technology governance process that isn’t well-adapted to managing AI risks, or even a third party provider that’s using AI to deliver services without the right safeguards and ethical considerations in place.

To understand and respond to the risks of AI and advanced analytics, organisations first need to identify where they are being used, and what they are used for.

Where might an organisation already be relying on advanced analytics and AI models?

Questions to ask:

Where might we already be using AI within our organisation?

What are our current AI models being used for, and have we assessed the risks associated with those use cases?

What data is used by the model, and what business functions are supported by the model? Are we meeting our contractual and regulatory obligations as they relate to the use of data as well as the models themselves?

Is our current use of AI models in alignment with our risk appetite, AI principles and values?
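One practical way to start answering these questions is a simple inventory of AI models. The sketch below is illustrative only: the `ModelRecord` fields, the `needs_attention` helper and the example entries are hypothetical and would need to be adapted to your own risk appetite, AI principles and obligations.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ModelRecord:
    """One entry in a hypothetical AI model inventory."""
    name: str
    purpose: str                    # what the model is used for
    business_functions: List[str]   # business functions the model supports
    data_assets: List[str]          # data used by the model
    third_party: bool = False       # delivered by an external provider?
    risk_assessed: bool = False     # has the use case been risk assessed?
    within_risk_appetite: Optional[bool] = None  # None means not yet decided


def needs_attention(inventory: List[ModelRecord]) -> List[str]:
    """Names of models that lack a risk assessment or are not confirmed within risk appetite."""
    return [
        m.name
        for m in inventory
        if not m.risk_assessed or m.within_risk_appetite is not True
    ]


# Made-up example entries, for illustration only.
inventory = [
    ModelRecord("fraud-scoring", "Detect fraud", ["Payments"], ["transactions"],
                risk_assessed=True, within_risk_appetite=True),
    ModelRecord("cv-screening", "Shortlist applicants", ["HR"], ["applicant CVs"],
                third_party=True),
]

flagged = needs_attention(inventory)
print(flagged)
```

Even a register this simple gives central visibility of which models exist, what data they rely on and which ones still need a risk decision.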

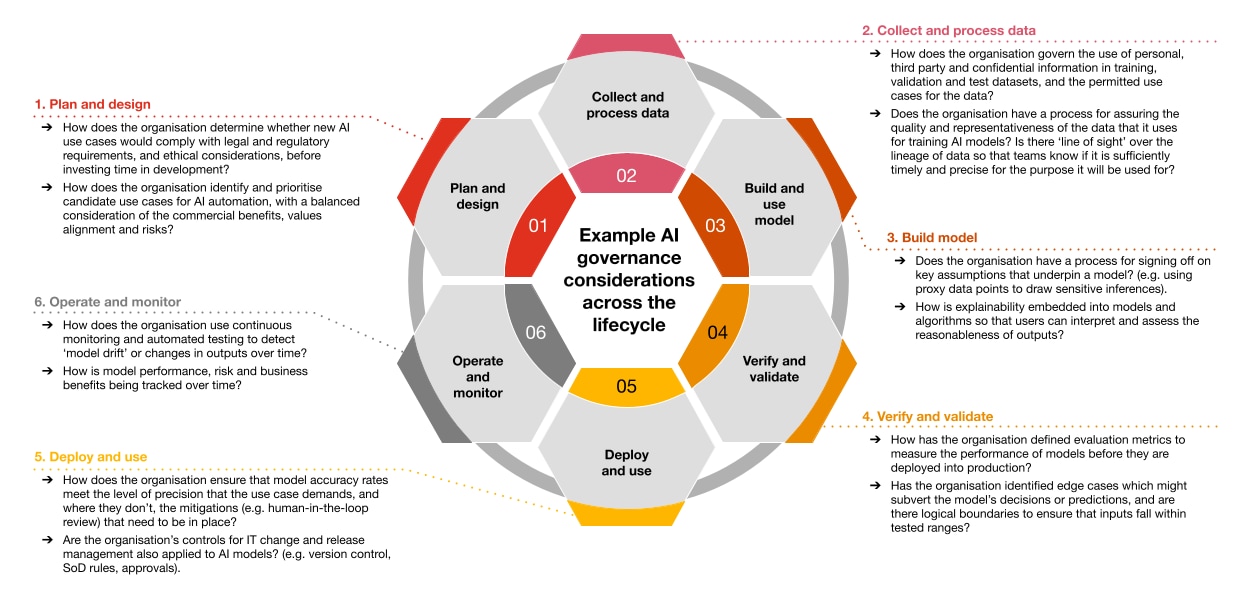

Rather than addressing compliance as an afterthought, design an AI governance framework that integrates your compliance requirements into the development and operating lifecycle for AI models. If done correctly, compliance-by-design can reduce friction between development and compliance, help you deploy safe solutions faster, and reduce the risk of rework from compliance issues that are detected after model design and development.
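As a rough illustration of compliance-by-design, the sketch below checks that a set of required controls is complete before a model moves out of a lifecycle stage. The stage names and control names are hypothetical placeholders, not taken from any particular framework, and a real implementation would sit inside your existing development and release tooling.

```python
from typing import Dict, Set

# Hypothetical controls that must be complete before a model leaves each stage.
REQUIRED_CONTROLS: Dict[str, Set[str]] = {
    "design": {"use_case_risk_assessment", "privacy_impact_assessment"},
    "development": {"training_data_review", "bias_testing"},
    "deployment": {"human_oversight_plan", "resilience_plan"},
}


def missing_controls(stage: str, completed: Set[str]) -> Set[str]:
    """Return the controls still outstanding for the given stage."""
    return REQUIRED_CONTROLS[stage] - completed


def can_progress(stage: str, completed: Set[str]) -> bool:
    """A model may progress only when no required control is outstanding."""
    return not missing_controls(stage, completed)


# Example: bias testing is done, but the training data review is not.
outstanding = missing_controls("development", {"bias_testing"})
print(sorted(outstanding))
```

Automating checks like this is one way to reduce reliance on manual controls and to surface compliance gaps before design and development are finished.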

Every organisation is at a different stage on the AI governance journey, but most organisations are not starting from a blank sheet of paper. For example, organisations that have a mature technology and data governance framework in place will already have a foundation for AI governance.

How progressed is your organisation on its AI governance journey?

Source: PwC, based on the AI lifecycle stages from the NIST AI Risk Management Framework (1.0).

Questions to ask:

Do we have an AI governance framework in place?

Is our AI governance framework practical to follow? How reliant are we on manual controls to remain compliant?

How confidently can I answer the example governance questions for each AI lifecycle phase?

For the organisations that apply them wisely, AI and advanced analytics have the potential to save time and money, improve products and services and even strengthen reputations. But the approach should be human-led and tech-powered, rather than the other way around.

Generative AI tools push new boundaries for responsible AI: url.pwc.com/BzMZXO8

PwC’s Responsible AI Toolkit: AI you can trust: pwc.com/rai

NIST AI Risk Management Framework: nist.gov/itl/ai-risk-management-framework

OECD Framework for the Classification of AI Systems: https://www.oecd-ilibrary.org/science-and-technology/oecd-framework-for-the-classification-of-ai-systems_cb6d9eca-en

ISO/IEC 23894 - Artificial Intelligence - Guidance on risk management: https://www.iso.org/standard/77304.html
